It’s coursework day for me and I did two things: I watched the video on Applications, Algorithms and Data: Open Educational Resources and the Next Generation of Virtual Learning, and I sorted out harvesting the course feeds in both Feedly and gRSShopper, which was a suggested task for this week. This post is divided into two sections to address each of these in turn, and I promise to use headings so it is easy to navigate (that means if you want to skip to whatever interests you, that’s ok too). My earlier posts for #el30 can be found HERE.

Part 1

Notes on the video:

(This presentation was almost exactly a year ago. To me it is nice to think that this has been ‘cooking’ as part of the course for some time. I appreciate that.)

- Jupyter notebooks work without the internet.

Stephen asks: Why do we think VLS would only be used on the web? –They can be used anywhere. (1.40.00 in the vid)

OH SO TRUE! It makes me think of the classic Marshall McLuhan & Quentin Fiore book The Medium is the Massage. Although everything we do tends to entropy, we also cling to habit and stasis and it’s rare to find forward-thinkers, as they don’t fit in boxes. (my words, not theirs)

Portability. This is fantastic in principle, although there are risks with USB sticks (security, and they degrade, sometimes unpredictably). However, the idea of pointing to a URL that is housed somewhere else (the cloud) is a wonderful solution.

At 1.56.30 in the video I got it. Stephen says Tony Hirst is supplying students with virtual machines, and all the Jupyter notebooks they need for their classes are already loaded on the virtual desktop. That makes sense, and somehow I think either I missed that or it went over my head in their video for the course.

So if you have a Docker environment (to provide and run the containers), you can load all your Jupyter stuff into a container and then run it from the cloud. That means it doesn’t have to be on your machine. The other option is installing everything natively, on your own machine, which means you need to download all the individual things such as ‘kernels’, and that takes both space and know-how.
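To make that a bit more concrete for myself, here is a minimal sketch in Python of what starting a ready-made Jupyter container might look like. It only builds the `docker run` command; it does not run it. The image name `jupyter/base-notebook` and the folder `/home/jovyan/work` come from the public Jupyter Docker Stacks project and are my assumptions, not something named in the video. The function name and the example path are made up for illustration. This version runs the container on your own machine, but the same image could be started on a cloud host instead.

```python
import shlex


def jupyter_container_command(image="jupyter/base-notebook",
                              host_port=8888,
                              notebooks_dir=None):
    """Build (but do not run) a docker command that starts a Jupyter server.

    The image name and the /home/jovyan/work folder are assumptions based on
    the Jupyter Docker Stacks conventions; swap in whatever a course provides.
    """
    # --rm removes the container when it stops; -p maps a port on your
    # machine to port 8888 inside the container, where Jupyter listens.
    cmd = ["docker", "run", "--rm", "-p", f"{host_port}:8888"]
    if notebooks_dir:
        # -v shares a local folder of notebooks with the container.
        cmd += ["-v", f"{notebooks_dir}:/home/jovyan/work"]
    cmd.append(image)
    return cmd


# Hypothetical local path, purely for illustration.
print(shlex.join(jupyter_container_command(notebooks_dir="/path/to/notebooks")))
# prints: docker run --rm -p 8888:8888 -v /path/to/notebooks:/home/jovyan/work jupyter/base-notebook
```

The point is that the student only needs Docker and one command; everything else (Python, the kernels, the notebooks) travels inside the image.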

Why are containers useful?

They allow the builder (the course designer) to point the student to what they want them to learn, without the faff of the other stuff. For example, if I’m teaching Psychology of Learning and Teaching in Music (which I am in January), I could make a container with all sorts of things, from stats programmes to content and other tools. It would skip the need for the students to learn to download and install all the individual ‘stuff’, and it abstracts away the individual user’s equipment. By that I mean it bypasses the potential hazard of the student saying ‘it doesn’t work on my machine because I have a Mac/PC, my computer doesn’t do that’. It does do that if the container does that, as it is no longer reliant on ‘your’ machine to run.

Stephen is suggesting the sharing of containers as OER to be part of a distributed, yet decentralised framework.

Part 2

Harvesting the course feeds

Stephen posted everything people need to do this. He posted the file we need HERE and also a full step-by-step video demonstrating how to gather the feeds in two different environments.

I followed the screens and went one step at a time, typing the URLs as they were called out in the video. I was worried at first that bringing up the home page (3.28) was an intrusion and maybe something I shouldn’t have typed, but it has LOADS of links on it and it took me straight to Feedly. I don’t think Stephen would have shown it if we weren’t allowed to type it.

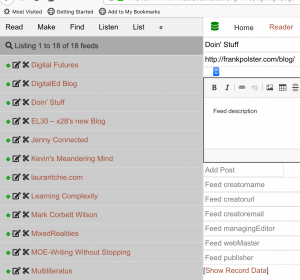

With Feedly

I did have to take baby steps. For example, saving the page didn’t mean saving the URL somehow (this was the bit that the recorder didn’t allow to be shown), but meant saving the page as a file. I was trying to right click on the URL (I know, rookie mistake, but doing this on my own without even more help and figuring out these little things is useful for me). Feedly was really easy and fortunately the ‘import OPML’ was on the front page for me. I suppose this is because I had just signed up, and so had no content yet. So if you’re creating an account, that bit should be easier to find than it was on the video. I love the hashtag functionality. I was worried that if #el30 posts are one (of many) pages on my website, people would essentially be spammed with things they are not interested in. It’s nice to know they don’t have to be. 🙂
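For anyone curious about what is inside the saved file, an OPML file is just XML, with one `outline` entry per feed and the feed address in an `xmlUrl` attribute. Here is a minimal sketch in Python that lists the feeds in such a file. The sample content and the `example.com` addresses are made up for illustration and are not the real course feeds, and `list_feeds` is my own name for the helper.

```python
import xml.etree.ElementTree as ET

# Made-up OPML for illustration only; the real course file will differ.
SAMPLE_OPML = """<opml version="2.0">
  <head><title>Example course feeds</title></head>
  <body>
    <outline text="Example Blog A" type="rss" xmlUrl="https://example.com/a/feed" />
    <outline text="A folder of feeds">
      <outline text="Example Blog B" type="rss" xmlUrl="https://example.org/b/rss.xml" />
    </outline>
  </body>
</opml>"""


def list_feeds(opml_text):
    """Return (title, feed URL) pairs for every feed in an OPML document."""
    root = ET.fromstring(opml_text)
    feeds = []
    # iter() walks nested outlines too, so feeds inside folders are found.
    for outline in root.iter("outline"):
        url = outline.get("xmlUrl")
        if url:  # folders have no xmlUrl, so they are skipped
            feeds.append((outline.get("text", ""), url))
    return feeds


for title, url in list_feeds(SAMPLE_OPML):
    print(title, url)
```

This is roughly what a reader like Feedly has to do when you choose ‘import OPML’: read the file, pull out each feed address, and subscribe to it.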


With gRSShopper

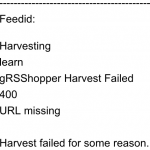

When I followed all the steps the first time, it said that everything failed and I wasn’t sure why. Actually I’m not sure it did fail, but I did get an error message.

Going back and re-examining the video, I noticed that Stephen had various boxes ticked as ‘yes’ on the Harvester page. For example, I had the default setting where harvester was set to ‘no’, and so I turned this on, and also turned on the audio settings. I will look into the URLs that Stephen had under the audio settings (see 8.00 in the vid) as I think I may eventually want those. Like I said when I installed gRSShopper, I have not read all about how to use it and there is LOTS of how-to information, so this is not a flaw of the system, just that I don’t know how to use it yet!

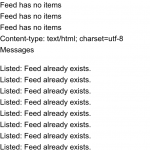

I had thought it failed, but when I compared screens, Stephen had the same error message but it worked for him, and when I did the next steps of finding the feeds – they were all there! At that point I was really pleased he said that you wouldn’t get double feeds, as I had tried the import from the file, the URL, and the file again and indeed there were no duplicates.
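I don’t know how gRSShopper avoids the duplicates internally, but the general idea can be sketched in a few lines of Python: keep track of the feed addresses you already have, and only add the ones you haven’t seen. The function names, the tidying-up rule and the `example.com` addresses below are my own assumptions for illustration, not gRSShopper’s actual code.

```python
def normalise(url):
    """Tidy a feed URL so trivially different spellings compare equal."""
    return url.strip().rstrip("/")


def import_feeds(existing, incoming):
    """Add new feed URLs to `existing` in place; return the ones added."""
    known = {normalise(url) for url in existing}
    added = []
    for url in incoming:
        key = normalise(url)
        if key not in known:  # skip anything already harvested
            known.add(key)
            existing.append(url)
            added.append(url)
    return added


# Made-up feed addresses, imported three times as I did in the task.
course_feeds = ["https://example.com/a/feed", "https://example.org/b/rss.xml"]
my_feeds = []
print(len(import_feeds(my_feeds, course_feeds)))  # prints: 2
print(len(import_feeds(my_feeds, course_feeds)))  # prints: 0
print(len(import_feeds(my_feeds, course_feeds)))  # prints: 0
print(len(my_feeds))                              # prints: 2
```

However many times the same list is imported, the feed list stays the same length, which matches what I saw.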


Task-o-riffic! I was very pleased that it worked in both places. If the task was a game, I am sure a pop-up would have appeared to tell me I had levelled up some aspect of something. Even without a pop-up, I smiled. 🙂